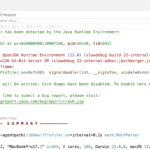

The changes I described in this blog post led to segfaults in tests, so I backtracked on them for now. Maybe I made a mistake implementing the changes, or my reasoning in the blog post is incorrect. I don’t know yet.

In the last blog post, I wrote about how to size the request queue properly and proposed the sampler queue’s dynamic sizing. But there are two topics I haven’t covered in this series so far, one rather fun and one rather serious:

- Is the sampler queue really a queue?

- Should the queue implementation use atomics and acquire-release semantics?

This is what we cover in this short blog post. First, to the rather fun topic:

Is it a Queue?

I always called the primary data structure a queue, but recently, I wondered whether this term is correct. But what is a queue?

Definition: A collection of items in which only the earliest added item may be accessed. Basic operations are add (to the tail) or enqueue and delete (from the head) or dequeue. Delete returns the item removed. Also known as “first-in, first-out” or FIFO.

Dictionary of Algorithms and Data Structures by Paul E. Black

But how does my sampler use the sampler queue?

It enqueues elements at the end of the data structure but doesn’t use a dequeue operation. Instead, the draining code walks over the whole Queue and clears it afterwards. So, we only implement half of the queue semantics.

So this is rather a request buffer. JFR uses the same name for its main event data store, in which events are submitted/enqueued and then periodically processed and removed.
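To make the difference concrete, here is a minimal sketch of such buffer semantics: append at the tail, then drain everything and clear. `RequestBuffer`, `SampleRequest`, and the fixed capacity are hypothetical names and assumptions for illustration, not the actual OpenJDK classes.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a sample request; the real request type differs.
struct SampleRequest {
  long timestamp;
};

// Append-only request buffer: producers append, the consumer drains all at once.
class RequestBuffer {
 public:
  explicit RequestBuffer(std::size_t capacity) : _capacity(capacity) {
    assert(capacity > 0);
    _entries.reserve(capacity);
  }

  // Appends to the tail; returns false (request lost) when the buffer is full.
  bool enqueue(const SampleRequest& request) {
    if (_entries.size() == _capacity) {
      return false;
    }
    _entries.push_back(request);
    return true;
  }

  // Visits every entry in insertion order, then clears the buffer.
  // Note that there is no per-element dequeue operation.
  template <typename Visitor>
  std::size_t drain(Visitor visit) {
    std::size_t count = _entries.size();
    for (const SampleRequest& request : _entries) {
      visit(request);
    }
    _entries.clear();
    return count;
  }

  std::size_t size() const { return _entries.size(); }

 private:
  std::size_t _capacity;
  std::vector<SampleRequest> _entries;
};
```

The only way to get elements out is `drain`, which is exactly the half of the queue interface that the sampler uses.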

So why did I use the term queue? For historical reasons, which also tie into the overuse of atomics: Previously, the Queue was a lock-free data structure that supported concurrent enqueuing and dequeuing to improve performance. But in the version that got into the OpenJDK, the Queue is only accessed after acquiring the queue lock (see my implementation blog post).

I’ll still use the term queue because changing all the names is probably not worth it. Remember that it has buffer semantics (or an append-only queue if you want to be confusing).

Why is the Queue only accessed under a Lock?

The Java thread can’t run Java code while walking the stack. Waiting on a lock at the safepoint, which happens at the end of every native method before rerunning Java code, ensures that no Java code is running in parallel with the out-of-stack thread sampler.

Synchronizing the signal handler and drainage code is not strictly required, but it is also not really expensive. It allows us to simplify the code, as we can drain all elements in the Queue in one go.

Synchronization is not required because, for one, when the thread is at the safepoint, the current thread state is in VM, so the signal handler can’t process the stack anyway. Also, out-of-stack walking happens typically (in benchmarks like Renaissance) in so few instances that this is also not a problem in most cases.

As a side note: Acquiring the lock in the signal handler is not problematic, as the signal handler never waits to get the lock. The lock acquisition also prevents re-entrance, which makes the code simpler.
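The "never waits" part can be sketched as a single lock attempt that gives up immediately when the lock is taken. The struct, the field names, and the boolean lock below are assumptions for illustration; the real sampler uses its own lock and request types.

```cpp
#include <atomic>

// Hypothetical per-thread sampler state; names are illustrative only.
struct ThreadSamplerState {
  std::atomic<bool> queue_locked{false};
  int queued_requests = 0;  // only touched while queue_locked is held
};

enum class HandlerResult { Enqueued, LockBusy };

// Called from the signal handler: tries the lock exactly once and never waits.
HandlerResult on_signal(ThreadSamplerState& state) {
  // exchange returns the previous value: true means somebody else holds the
  // lock (the drain code, or a handler invocation that was interrupted).
  if (state.queue_locked.exchange(true, std::memory_order_acquire)) {
    return HandlerResult::LockBusy;
  }
  state.queued_requests++;  // plain access, guarded by the lock
  state.queue_locked.store(false, std::memory_order_release);
  return HandlerResult::Enqueued;
}
```

Because a second invocation sees the lock as taken and returns right away, the same check also covers re-entrance.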

And of course, the typical queue size is just one, so the drainage code doesn’t hold the lock for long (see Java 25’s new CPU-Time Profiler: Queue Sizing (3)).

Reduced Synchronization in the Queue

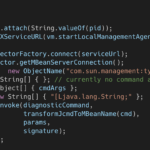

The Queue doesn’t need to be lock-free or concurrently accessible anymore, as a lock guards every access:

In fact, this is where the names come from: acquiring a lock implies acquire semantics, while releasing a lock implies release semantics! All the memory operations in between are contained inside a nice little barrier sandwich, preventing any undesireable memory reordering across the boundaries.

Acquire and Release Semantics – Preshing on Programming

Looking at the current code, you still find remnants of the lock-free implementation Andrei Pangin and I worked on before we changed the sampler to only access the Queue under lock. In the rush to get the JEP into JDK 25, we pushed the removal of the redundant synchronization and overly conservative memory semantics to a later date. We documented this intended removal in JDK-8358616.

Removing all the lock-free code should make the code easier to read and reason about, and a tiny bit faster.

The only place where we still need the acquire-release semantics and atomics is the lost_samples property of the Queue, as it is also incremented whenever the signal handler fails to acquire the lock, and is therefore modified without the lock being held.
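As a rough sketch of the intended end state: the head index becomes a plain field that relies on the lock, while the lost-samples counter stays atomic. `QueueCounters` and its method names are hypothetical, and the `acq_rel` ordering simply mirrors the statement above; whether a weaker ordering would suffice is not something this sketch settles.

```cpp
#include <atomic>
#include <cstddef>

// Hypothetical, simplified queue bookkeeping; names are illustrative only.
class QueueCounters {
 public:
  // Caller must hold the queue lock, so a plain increment is enough.
  void advance_head() { _head++; }

  // Caller must hold the queue lock.
  std::size_t head() const { return _head; }

  // Caller must hold the queue lock.
  void reset_head() { _head = 0; }

  // May be called WITHOUT the lock (the handler failed to acquire it),
  // so this has to be an atomic read-modify-write.
  void increment_lost_samples() {
    _lost_samples.fetch_add(1, std::memory_order_acq_rel);
  }

  // Reads the counter and resets it to zero in one atomic step.
  std::size_t get_and_reset_lost_samples() {
    return _lost_samples.exchange(0, std::memory_order_acq_rel);
  }

 private:
  std::size_t _head = 0;                      // guarded by the queue lock
  std::atomic<std::size_t> _lost_samples{0};  // accessed with and without lock
};
```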

But of course, David Holmes already mentioned this improvement in a comment to the issue:

The synchronization mechanisms used are somewhat unclear. We have a logical tri-state spin-lock which is acquired through Atomic::cmpxchg and so is a full bi-direction barrier, and it is released with release semantics. But whilst that lock is held we also perform atomic updates on the queue – yet access to the queue should be serialized by the spin-lock. We then further use acquire/release every time we touch the queue `_head` (which is just an index) which is completely unnecessary in most (if not all) cases.

David Holmes’s comment on JDK-8358616

But I apparently missed it when I started working on this blog post and the change.
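For readers who want to picture the lock described in that comment, here is a minimal sketch using `std::atomic` instead of HotSpot's `Atomic` class. The three state names are my assumption for illustration, and the default sequentially consistent compare-and-exchange only roughly corresponds to the full barrier of `Atomic::cmpxchg`.

```cpp
#include <atomic>
#include <cassert>

// State names are assumptions for illustration.
enum class LockState : int { Unlocked, Enqueue, Dequeue };

class TriStateLock {
 public:
  // Single attempt to move from Unlocked to the wanted state.
  // The default ordering of compare_exchange_strong is seq_cst.
  bool try_acquire(LockState wanted) {
    assert(wanted != LockState::Unlocked);
    LockState expected = LockState::Unlocked;
    return _state.compare_exchange_strong(expected, wanted);
  }

  // Unlocking only needs release semantics: everything written while
  // holding the lock becomes visible to the next thread that acquires it.
  void release() {
    _state.store(LockState::Unlocked, std::memory_order_release);
  }

  LockState state() const { return _state.load(std::memory_order_acquire); }

 private:
  std::atomic<LockState> _state{LockState::Unlocked};
};
```

Everything between a successful `try_acquire` and the matching `release` is already ordered by the lock, which is why additional acquire/release operations on a field like `_head` add nothing.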

Disadvantages

The alternative to removing the lock-free part of the Queue would be to let the signal handler enqueue requests in parallel with the out-of-stack sampler thread, thereby fully using the advantage a lock-free queue gives us. However, we would still need the lock to synchronize between the safepoint handler and the out-of-stack sampler.

As seen in Java 25’s new CPU-Time Profiler: Queue Sizing (3), the native worst case might lead to fairly long drainage pauses of 200ms, which would result in the loss of a significant number of events.

In my opinion, reducing complexity, and possibly execution time when Java methods dominate, is worth forgoing the slight improvement in parallelism. For now, I propose to wait until we see that the native worst case actually occurs. We can always improve the Queue and its synchronization later.

Conclusion

In this blog post, I showed you there are still areas for improvement for my JEP. But with every change, the code gets a tiny bit better. The changes discussed here are significant because they simplify the queue code by taking advantage of the already present locking mechanism.

Thanks for reading so far in my blog series on improving the new CPU-time sampler for JFR, one blog post at a time. I’ll see you next week with a blog post on JFR’s startup messages.

This blog post is part of my work in the SapMachine team at SAP, making profiling easier for everyone. Thank you to Francesco Nigro for answering all my concurrency-related questions.

P.S.: I call my current style of development Blog post Driven Development (BDD).

P.P.S.: This is what different LLMs think about the queue naming (complete answers):

| Model | Verdict | Reasoning | Alternatives |
|---|---|---|---|
| GPT-4.1 | ✅ Queue is fine (SPSC linear queue) | Enqueue exists but is consumed in batches, cleared at once. | Keep “Queue” or clarify as a linear SPSC queue |
| Claude 3.7 | ❌ Misleading | No dequeue; direct indexing; batch-like. | …Buffer, …SampleLog |
| GPT-5 | ❌ Not really | No dequeue; drained/reset in bulk; resizable. | …Buffer, …SampleBuffer |
| Claude Sonnet 4 | ❌ Misleading | Keep “Queue” or clarify as a linear SPSC queue | …Buffer, …Array, …Log |
| Gemini 2.5 Pro | ✅ Reasonable | Keep “Queue” or clarify as a linear SPSC queue | Could be …Buffer or …Collector |

P.P.P.S.: After a blogging hiatus, I found passion in writing blog posts again. So expect a shorter blog post at least every two weeks rather than occasional big ones. The shorter format is more compatible with my day-to-day work and allows me to quickly showcase the topics I’m already working on.

P.P.P.P.S.: You’re still here? Go outside, smell some flowers, and have a good time.