Ever had a tricky bug caused by a race condition or rare concurrency condition that was really hard to reproduce? It’s great when you have a fix that should work in theory, but without a reproducer, only time will tell whether your fix really worked. In this blog post, we’ll revisit my old blog post Hello eBPF: Concurrency Testing using Custom Linux Schedulers (19), and try to use the concurrency-fuzz-scheduler to reproduce a bug I fixed a while ago in the OpenJDK.

The scheduler aims to be as chaotic as possible; hence, Jake Hillion’s Rust version is called scx_chaos. But we’ll focus on the Java version, the concurrency-fuzz-scheduler, because it’s not only implemented in Java on top of my hello-ebpf library, but it’s also optimized for fuzzing Java applications, inserting random sleeps at the scheduler level with a focus on non-VM threads.

TL;DR: The concurrency scheduler is a nice tool to provoke rare parallelism conditions and create reproducers.

The bug in question is JDK-8366486, reported by David Holmes in August 2025: a test case that checks that we can run multiple recordings with the CPU-time sampler in direct succession sometimes timed out. The twist: the test should not have worked at all, yet it passed most of the time. If you’re only interested in the actual bug, skip ahead to the end of this blog post for an explanation.

You’ll find the fixed version here and the broken version here (because the old JDK with the actual bug had compilation issues on my current system, I had to reintroduce the bug in a separate branch).

Let’s start by running the test case with the standard Linux scheduler on a large machine, so that everything can run nicely in parallel:

Running the Test Case Normally

The test case is part of the test suite of the OpenJDK, so we can run the test via make:

make CONF=linux-x86_64-server-fastdebug test \
  TEST=jtreg:test/jdk/jdk/jfr/event/profiling/TestCPUTimeSampleMultipleRecordings.java

This automatically builds the tests for us and then runs them with the jtreg runner. We can also call jtreg directly, using the command stored in build/linux-x86_64-server-fastdebug/test-support/jtreg_test_jdk_jdk_jfr_event_profiling_TestCPUTimeSampleMultipleRecordings_java/jtreg.cmdline. For simplicity, we store this command in a file called test.sh.

Let’s run this using hyperfine:

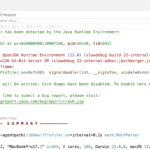

> hyperfine ./test.sh --runs 50
Benchmark 1: ./test.sh
  Time (mean ± σ):     25.093 s ± 13.168 s    [User: 12.819 s, System: 1.656 s]
  Range (min … max):   10.828 s … 62.806 s    50 runs

So with the standard scheduler, the test finishes fairly quickly on average, with only occasional longer runs of up to about a minute.

Running the Test Case with the Chaotic Scheduler

Let’s run the same test case using the chaotic concurrency-fuzz-scheduler:

> ./scheduler.sh ./test.sh --log --java --timeout 200 --sleep 0.1ms,10ms --run 0.1ms,10ms
...
Iteration timed out
Killing process
Iteration Count: 11
Iteration Duration: mean=67.4s+-49.9s,min=22.0s,max=200.5s

It takes only a few minutes (usually around 10) to reach 200-second runtimes, making error reproduction far faster.
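To make the --run and --sleep ranges a bit more concrete, here is a toy, single-thread model in plain Java. It assumes that a thread alternates between running for a random time drawn from the --run range and being held back for a random time drawn from the --sleep range. This is my simplification for illustration, not the scheduler’s actual implementation, which makes its decisions in eBPF for all threads at once and focuses on non-VM threads; the class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Random;

public class ChaosTimelineSketch {
    // One phase of a thread's (hypothetical) timeline: running or held back.
    record Phase(boolean running, long micros) {}

    // Toy model, not the real scheduler: alternate random run and sleep
    // phases, starting with a run phase, until at least totalMicros of time
    // is covered. All bounds are inclusive and given in microseconds.
    static List<Phase> timeline(long seed, long totalMicros,
                                long runMin, long runMax,
                                long sleepMin, long sleepMax) {
        Random rnd = new Random(seed);
        List<Phase> phases = new ArrayList<>();
        long elapsed = 0;
        boolean running = true;
        while (elapsed < totalMicros) {
            long duration = running
                    ? rnd.nextLong(runMin, runMax + 1)      // bound is exclusive
                    : rnd.nextLong(sleepMin, sleepMax + 1);
            phases.add(new Phase(running, duration));
            elapsed += duration;
            running = !running;
        }
        return phases;
    }

    public static void main(String[] args) {
        // --run 0.1ms,10ms and --sleep 0.1ms,10ms, expressed in microseconds
        for (Phase p : timeline(42, 50_000, 100, 10_000, 100, 10_000)) {
            System.out.println((p.running() ? "run   " : "sleep ") + p.micros() + " us");
        }
    }
}
```

Even this crude model shows why such a scheduler shakes up thread interleavings: every thread is paused at random points for random amounts of time, so orderings that are rare under the standard scheduler show up much more often.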

With a few minutes more, we can even reach a runtime of 380 seconds (running with --timeout 400):

Iteration Count: 29
Iteration Duration: mean=84.3s+-92.5s,min=18.6s,max=384.6s

Let’s run the fixed version of the test case for comparison:

Running the Fixed Test Case

The fixed version can run in a loop for literal hours with the chaotic scheduler, without any problems:

Iteration Count: 1119
Iteration Duration: mean=13.3s+-1.2s,min=11.4s,max=20.5s
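The Iteration Duration lines summarize all iterations of a scheduler run. As a hypothetical sketch of how to read them, assuming that +- denotes the population standard deviation (I haven’t checked this against the scheduler’s code), the following computes a line of the same shape from a list of durations in seconds:

```java
import java.util.DoubleSummaryStatistics;
import java.util.List;
import java.util.Locale;

public class IterationSummary {
    // Hypothetical helper: formats durations (in seconds) like the scheduler's
    // "Iteration Duration" line, assuming "+-" is the population standard
    // deviation. Expects a non-empty list.
    static String summarize(List<Double> seconds) {
        DoubleSummaryStatistics stats = seconds.stream()
                .mapToDouble(Double::doubleValue)
                .summaryStatistics();
        double mean = stats.getAverage();
        double variance = seconds.stream()
                .mapToDouble(s -> (s - mean) * (s - mean))
                .sum() / stats.getCount();
        return String.format(Locale.ROOT,
                "Iteration Duration: mean=%.1fs+-%.1fs,min=%.1fs,max=%.1fs",
                mean, Math.sqrt(variance), stats.getMin(), stats.getMax());
    }

    public static void main(String[] args) {
        // Made-up durations, for illustration only
        System.out.println(summarize(List.of(12.0, 13.5, 14.5)));
    }
}
```

Read this way, a standard deviation larger than the mean, as for the broken test (84.3s+-92.5s), points to a skewed distribution dominated by a few very long runs, while the fixed test’s 13.3s+-1.2s is tightly clustered.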

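Running the fixed test case on its own, without the chaotic scheduler, shows that the performance impact of the custom scheduler is not terrible. The mean runtime drops from 13.3 s to 5.8 s, so the scheduler slows the test down by a factor of roughly 2.3: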

> hyperfine ./test.sh --runs 50
Benchmark 1: ./test.sh
  Time (mean ± σ):      5.805 s ±  0.047 s    [User: 13.321 s, System: 1.660 s]
  Range (min … max):    5.727 s …  6.003 s    50 runs

But we can surely improve it.

The Bug

Let’s break down the issue in the test case by taking a look at the code:

import jdk.jfr.consumer.RecordingStream;
import jdk.jfr.internal.JVM;
import jdk.test.lib.jfr.EventNames;

public class TestCPUTimeSampleMultipleRecordings {

    static volatile boolean alive = true;

    public static void main(String[] args) throws Exception {
        // start a thread that spends time on the CPU
        Thread t = new Thread(TestCPUTimeSampleMultipleRecordings::nativeMethod);
        t.start();

        for (int i = 0; i < 2; i++) {
            try (RecordingStream rs = new RecordingStream()) {
                // enable the CPU-time sampler to record a sample per thread
                // every millisecond of CPU time
                rs.enable(EventNames.CPUTimeSample).with("throttle", "1ms");

                rs.onEvent(EventNames.CPUTimeSample, e -> {
                    // when we get our first sample, we end the recording
                    alive = false;
                    rs.close();
                });

                // we start the recording; this call only returns when
                // the recording is closed
                rs.start();
            }
        }

        alive = false;
    }

    public static void nativeMethod() {
        while (alive) {
            JVM.getPid();
        }
    }
}

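Do you spot the issue? Look closely at where we set the alive variable to false. The event handler of the first recording already sets it, so the nativeMethod thread stops consuming CPU time shortly after the first recording ends. The second recording then has no busy thread left to sample: rs.start() only returns once the handler closes the stream, so the test only finishes if some other thread happens to produce a CPU-time sample, and otherwise runs until it times out.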
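To see the control flow in isolation, here is a hypothetical, JFR-free model of the test. A busy thread stands in for nativeMethod, and each “recording” simply waits on a CountDownLatch that the busy thread counts down, which stands in for receiving a sample. Unlike the real test, the model uses a timeout instead of hanging, and after clearing alive it waits for the busy thread to exit so that the outcome is deterministic. It models only the logic, not how JFR actually samples threads.

```java
import java.util.Arrays;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;

public class StopTooEarlyModel {
    // Stands in for the test's alive flag.
    static volatile boolean alive;
    // Latch of the currently active "recording", or null if none is active.
    static volatile CountDownLatch activeRecording;
    // The real test has no timeout; the model uses one so it cannot hang.
    static final long SAMPLE_TIMEOUT_MS = 2_000;

    // Stands in for nativeMethod: burns CPU and hands a "sample" to
    // whichever recording is currently waiting for one.
    static void busyLoop() {
        while (alive) {
            CountDownLatch latch = activeRecording;
            if (latch != null) {
                latch.countDown();
            }
            Thread.onSpinWait();
        }
    }

    // Runs two "recordings" back to back; element i says whether
    // recording i received a sample before the timeout.
    static boolean[] run(boolean stopWorkerInHandler) throws InterruptedException {
        alive = true;
        Thread worker = new Thread(StopTooEarlyModel::busyLoop);
        worker.start();
        boolean[] gotSample = new boolean[2];
        for (int i = 0; i < 2; i++) {
            CountDownLatch latch = new CountDownLatch(1);
            activeRecording = latch;
            gotSample[i] = latch.await(SAMPLE_TIMEOUT_MS, TimeUnit.MILLISECONDS);
            activeRecording = null;
            if (gotSample[i] && stopWorkerInHandler) {
                alive = false;   // what the buggy event handler does
                worker.join();   // model simplification: wait until it has stopped
            }
        }
        alive = false;
        worker.join();
        return gotSample;
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println("handler stops worker: " + Arrays.toString(run(true)));
        System.out.println("worker keeps running: " + Arrays.toString(run(false)));
    }
}
```

When the handler stops the worker, the second recording should report false because nothing is left to produce a sample. When the worker keeps running until after the loop, as the final alive = false in the test already arranges, both recordings should report true.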

Why does it not always fail?

How does this test case usually succeed at all? With a simple print statement in the event handler, we find that we most often get:

CPUTimeSample event received in recording 1: {
  osName = "JFR Periodic Tasks"
  osThreadId = 219953
  javaName = "JFR Periodic Tasks"
  javaThreadId = 51
  group = {
    parent = {
      parent = {
        parent = N/A
        name = "system"
      }
      name = "main"
    }
    name = "AgentVMThreadGroup"
  }
  virtual = false
}

This thread has nothing to do with our test case; it belongs to the internals of the OpenJDK JFR implementation. The thread in question runs the following loop (source):

while (true) {
    long wait = Options.getWaitInterval(); // 1000ms
    try {
        synchronized (this) {
            if (JVM.shouldRotateDisk()) {
                rotateDisk();
            }
            if (isToDisk()) {
                EventLog.update();
            }
        }
        long minDelta = PeriodicEvents.doPeriodic();
        wait = Math.min(minDelta, Options.getWaitInterval());
    } catch (Throwable t) {
        // Catch everything and log, but don't allow it to end the periodic task
        Logger.log(JFR_SYSTEM, WARN, "Error in Periodic task: " + t.getMessage());
    } finally {
        takeNap(wait);
    }
}

A single iteration of this loop usually takes around 0.16 ms (median) in our test runs. With our one-millisecond sampling interval, we expect to catch this thread quite often, depending on the scheduler’s patterns. But this is still a test bug, because the test runtime can fluctuate widely by chance. If the test worked as intended, nativeMethod would consume CPU time for the whole duration of the test.
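A back-of-the-envelope sketch of how long the “JFR Periodic Tasks” thread needs before it can be sampled at all. It assumes, as the test’s own comment states, that the sampler takes a sample per thread for every millisecond of CPU time, that every iteration costs exactly the median 0.16 ms, and that the thread naps for the full 1000 ms wait interval between iterations (minDelta can make these naps shorter). The class and method names are hypothetical.

```java
public class PeriodicTaskSampleEstimate {
    // Assumption: one sample per thread per millisecond of CPU time ("throttle" = 1ms).
    static final long SAMPLE_PERIOD_MICROS = 1_000;
    // Median CPU cost of one periodic-task iteration (0.16 ms).
    static final long ITERATION_COST_MICROS = 160;
    // Assumption: the thread naps for the full wait interval between iterations.
    static final long NAP_MILLIS = 1_000;

    // Iterations until the accumulated CPU time reaches one sample period
    // (integer ceiling division, avoiding floating-point rounding).
    static long iterationsUntilSample() {
        return (SAMPLE_PERIOD_MICROS + ITERATION_COST_MICROS - 1) / ITERATION_COST_MICROS;
    }

    // Rough wall-clock time until that iteration: one nap between consecutive iterations.
    static long approxWallClockMillis() {
        return (iterationsUntilSample() - 1) * NAP_MILLIS;
    }

    public static void main(String[] args) {
        // prints: 7 iterations, ~6000 ms
        System.out.println(iterationsUntilSample() + " iterations, ~" + approxWallClockMillis() + " ms");
    }
}
```

Under these assumptions, a sample of this thread only becomes due every few seconds. Shorter naps or more expensive iterations shift the estimate, which is one way the test runtime can fluctuate by chance.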

The chaotic scheduler increased the likelihood that the CPU-time profiler would not sample the “JFR Periodic Tasks” thread, though the exact reason is unclear to me.

If I’m honest, without the chaotic scheduler running and showing that the test’s runtime varies widely, I wouldn’t have gone down the path of trying to understand what happened. I would have put the test timeouts down to nothing more than synchronization issues. Heck, the first draft of this blog post even mentioned race conditions in the title.

Conclusion

The chaotic scheduler is, of course, still a prototype. Nevertheless, I hope I’ve shown you that it’s worth exploring for testing race conditions and tricky concurrency issues, and for creating fast reproducers. The scheduler is written directly in Java, so it would be interesting to integrate it with OpenJDK-related tooling. We’re essentially helping to debug a JVM test using a Java library in the kernel.

After Jake Hillion reproduced a kernel bug with his Rust version of the scheduler, this is now the second real-world bug where chaotic scheduling helped. I hope it’s not the last one.

This article is part of my work in the SapMachine team at SAP, making profiling and debugging easier for everyone.